Design of Experiments

Lead: Prof. Dr. Holger Dette

Members:

- Robin Solinus

Description:

In many cases, the efficiency of statistical investigations can be improved substantially by an optimal choice of the experimental conditions. Typical examples are the allocation of subjects in a clinical trial to different forms of therapy, or the determination of a dose-response curve for a new drug, in which subjects can be treated at different dose levels. In the working group "Optimal Design of Experiments", mathematical methods are developed to determine optimal experimental designs for these and other problems. Mathematically, these problems can be formulated as optimization problems for nonlinear functionals with an infinite-dimensional domain. The aim of the research is then to characterize the solutions of such problems.
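
As one standard illustration of such an optimization problem (the notation here is an assumption and is not taken from the group description): for a linear regression model $y = f(x)^\top \theta + \varepsilon$ on a design space $\mathcal{X}$, a design can be viewed as a probability measure $\xi$ on $\mathcal{X}$, and the D-optimality criterion reads

$$
M(\xi) = \int_{\mathcal{X}} f(x)\, f(x)^\top \, d\xi(x), \qquad \xi^{*} \in \arg\max_{\xi} \, \log\det M(\xi).
$$

The functional $\xi \mapsto \log\det M(\xi)$ is nonlinear, and the maximization runs over the infinite-dimensional set of probability measures on $\mathcal{X}$. Other optimality criteria lead to problems of the same type.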

Functional Data

Lead: Prof. Dr. Holger Dette

Members:

- Dr. Lujia Bai

- Dr. Marius Kroll

- Dr. Sebastian Kühnert

- Pascal Quanz

- Bufan Li

Description:

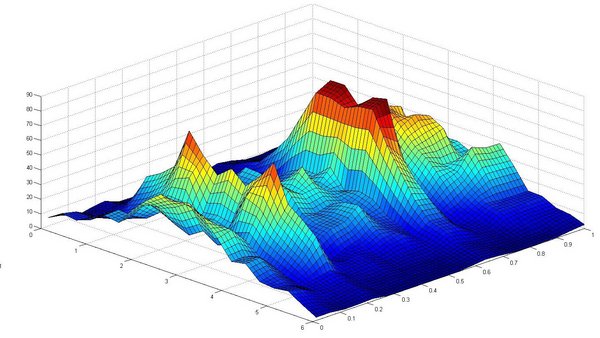

In statistical practice, it is often of interest to study how a quantity develops over time. Observed at discrete time points, such data sets are called time series. Typical examples arise in empirical economics (the development of prices) or in the life sciences (the growth of an organism), and even simple situations show that the analysis of time series goes methodologically well beyond the classical case of independent, identically distributed random variables. The independence assumption almost always has to be dropped, since the value or increment of a time series usually depends strongly on those in the immediate past. Dropping the assumption of identical distributions is less unavoidable, since it is often appropriate to work with models that assume homogeneous ("stationary") behavior over time. However, there are also models that incorporate periods of stronger and weaker growth or, more generally, periodic (for example annual) variations in parameters such as means or quantiles. Analyzing these different facets of the periodicity of a time series is a main focus of the working group "Time Series". In addition, we develop procedures for model validation, that is, we check to what extent a specific model is appropriate for a given time series. Methodologically, we proceed by defining suitable distance measures and building statistical tests on empirical versions of these measures that are as elegant as possible.

Inverse problems

Lead: Prof. Dr. Nicolai Bissantz

Members:

- Dr. Patrick Bastian

Description:

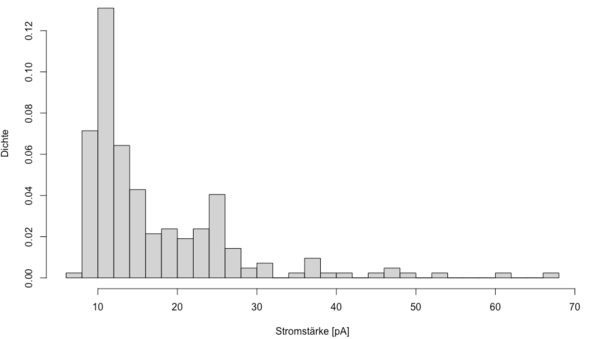

An inverse problem arises when the quantity of interest cannot be measured directly. A typical example is convolution, which occurs, for instance, in optical observations with telescopes in astronomy. Basic physical properties of light propagation and of diffraction at surfaces such as the mirrors and lenses of the telescope cause a point object to be observed not as a point but as a small disk. This "smearing" of the image can be represented mathematically as a convolution of the true image with the so-called point spread function. Reconstructing the true image from the observed, convolved image is thus an inverse problem. The reconstruction exhibits the difficulty characteristic of inverse problems: small observation errors can lead to arbitrarily large errors in the reconstructed image. Dealing with this difficulty is at the heart of the statistical treatment of inverse problems. In the inverse problems group, we are concerned with statistical methods for reconstructing the quantity of interest from such data (in particular in the convolution case) and with statistical inference for such problems.
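
The instability described above can be seen in a small simulation. The following is a minimal sketch under stated assumptions and not the group's method: it uses a one-dimensional signal, circular convolution, a Gaussian point spread function, and Tikhonov regularization as one common way to stabilize the inversion. All function names (`dft`, `gaussian_psf`, `blur`, `deconvolve`) and parameter values are hypothetical choices made for illustration.

```python
import cmath
import math
import random


def dft(x, inverse=False):
    """Naive O(n^2) discrete Fourier transform (adequate for small n)."""
    n = len(x)
    sign = 1 if inverse else -1
    out = []
    for k in range(n):
        s = sum(x[m] * cmath.exp(sign * 2j * cmath.pi * m * k / n) for m in range(n))
        out.append(s / n if inverse else s)
    return out


def gaussian_psf(n, sigma):
    """Assumed point spread function: a periodic Gaussian normalized to sum 1."""
    weights = [math.exp(-min(m, n - m) ** 2 / (2.0 * sigma ** 2)) for m in range(n)]
    total = sum(weights)
    return [w / total for w in weights]


def blur(signal, psf):
    """Circular convolution of signal and psf, computed in the Fourier domain."""
    product = [s * p for s, p in zip(dft(signal), dft(psf))]
    return [v.real for v in dft(product, inverse=True)]


def deconvolve(observed, psf, alpha=0.0):
    """alpha = 0 is the naive inverse filter; alpha > 0 is Tikhonov regularization."""
    obs_hat, psf_hat = dft(observed), dft(psf)
    est_hat = [o * p.conjugate() / (abs(p) ** 2 + alpha)
               for o, p in zip(obs_hat, psf_hat)]
    return [v.real for v in dft(est_hat, inverse=True)]


if __name__ == "__main__":
    rng = random.Random(0)
    n = 64
    truth = [0.0] * n
    truth[20] = 1.0  # a single point source, e.g. a star
    psf = gaussian_psf(n, sigma=2.0)
    # Observed image: blurred truth plus small measurement noise.
    noisy = [b + rng.gauss(0.0, 1e-3) for b in blur(truth, psf)]

    def max_error(estimate):
        return max(abs(a - b) for a, b in zip(estimate, truth))

    print("naive inverse filter, max error:", max_error(deconvolve(noisy, psf)))
    print("regularized, max error:", max_error(deconvolve(noisy, psf, alpha=1e-3)))
```

The naive filter divides by Fourier coefficients of the point spread function that are close to zero at high frequencies, so even small noise is amplified enormously. The regularized version trades this variance for some bias: the reconstructed point is smoothed but the error stays bounded.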

Random matrices

Lead: Prof. Dr. Holger Dette

Members:

- Thomas Lam

Description:

In probability theory and statistics, a random matrix is a matrix-valued random variable. Many important quantities in physics are modeled mathematically by random matrices (e.g. the thermal conductivity of a crystalline solid, or disordered physical systems). The group studies properties of the random spectrum of such matrices as the dimension increases. Of particular interest are relations to the asymptotic zero distributions of orthogonal polynomials, which have properties similar to those of the eigenvalue distributions of random matrices.
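
A classical instance of this connection, given here only as an illustration with notation that is not part of the original description: let $X_n$ be a symmetric $n \times n$ matrix whose entries on and above the diagonal are independent with mean zero and variance one, and let $\lambda_1, \dots, \lambda_n$ be the eigenvalues of $X_n / \sqrt{n}$. Wigner's semicircle law states that

$$
\frac{1}{n} \sum_{i=1}^{n} \delta_{\lambda_i} \;\longrightarrow\; \frac{1}{2\pi} \sqrt{4 - x^2}\; \mathbf{1}_{[-2,2]}(x)\, dx \qquad (n \to \infty)
$$

in the sense of weak convergence. The same limit appears for orthogonal polynomials: if $x_{1,n}, \dots, x_{n,n}$ denote the zeros of the Hermite polynomial $H_n$ (orthogonal with respect to the weight $e^{-x^2}$), then

$$
\frac{1}{n} \sum_{i=1}^{n} \delta_{x_{i,n} / \sqrt{n/2}} \;\longrightarrow\; \frac{1}{2\pi} \sqrt{4 - x^2}\; \mathbf{1}_{[-2,2]}(x)\, dx \qquad (n \to \infty).
$$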

Model selection and goodness-of-fit

Lead: Prof. Dr. Nicolai Bissantz

Members:

- Dr. Lujia Bai

- Dr. Patrick Bastian

- Dr. Marius Kroll

- Pascal Quanz

- Bufan Li

Description:

With real data sets, it is usually not clear how the data were generated. Nevertheless, the statistician is faced with the task of fitting a model to the data that best reflects their main characteristics and allows the most accurate predictions possible. A model selection procedure assists the statistician in deciding on the best-fitting model prior to the actual data analysis. A typical problem solved by model selection is the identification of relevant covariates in a regression model. For example, for a given biological trait, it is of interest to find out which genes influence the expression of the phenotype. Model selection procedures can be used to identify the relevant genes and then to describe the relationship between these genes and phenotype expression.

Goodness-of-fit tests can be used to compare different statistical models with respect to how well they fit the data. For example, once the relevant genes have been identified, both a linear model and a nonparametric curve can be fitted to predict phenotype expression for a given genetic profile. Which of the two is preferable can be determined by testing for a parametric form.

In the working group "Model selection and goodness-of-fit", classical model selection methods as well as more modern ones, such as penalized parametric regression, are developed and their properties are investigated. New tests for comparing regression curves are also developed.

Differential Privacy

Lead: Prof. Dr. Holger Dette

Members:

- Dr. Önder Askin

- Dr. Martin Dunsche

- Dr. Carina Graw

Description:

Statistics often involves working with sensitive data, so statistical analyses can endanger the privacy of individuals. Traditional approaches such as anonymization have become less important due to the rapid advancement of artificial intelligence, and a new notion of privacy has emerged: Differential Privacy. Conceptually, Differential Privacy guarantees that an individual cannot be identified as a participant in a data analysis (e.g., statistics, machine learning). Its success rests on the mathematical formalization and further development of this concept. The working group "Differential Privacy" investigates whether established statistical methods remain applicable in the context of differential privacy. One example is the interplay between privacy and statistical accuracy.
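
The trade-off between privacy and accuracy can be illustrated with the Laplace mechanism, a standard textbook construction and not necessarily one used by the group. The sketch below releases a differentially private mean. It assumes that the data are clipped to a known interval, that the sample size is public, and that neighboring data sets differ by replacing one record. The names `laplace_noise` and `private_mean` are hypothetical.

```python
import random


def laplace_noise(scale, rng):
    """Draw from Laplace(0, scale) as the difference of two exponential draws."""
    return rng.expovariate(1.0 / scale) - rng.expovariate(1.0 / scale)


def private_mean(values, lower, upper, epsilon, rng):
    """Release the mean of `values` with epsilon-differential privacy.

    Assumptions: values are clipped to [lower, upper], and the number of
    records is treated as public.
    """
    clipped = [min(max(v, lower), upper) for v in values]
    n = len(clipped)
    # Replacing one record changes the clipped mean by at most this amount.
    sensitivity = (upper - lower) / n
    true_mean = sum(clipped) / n
    return true_mean + laplace_noise(sensitivity / epsilon, rng)


if __name__ == "__main__":
    rng = random.Random(42)
    data = [rng.uniform(0.0, 1.0) for _ in range(1000)]
    for epsilon in (0.01, 0.1, 1.0):
        print(epsilon, private_mean(data, 0.0, 1.0, epsilon, rng))
```

A smaller `epsilon` gives a stronger privacy guarantee but a larger noise scale, so the released mean is less accurate. For a fixed `epsilon`, the noise scale shrinks as the sample size grows.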